What is serverless computing?

Serverless computing is an execution model where developers can write and deploy code without worrying about the infrastructure that it will run on. Instead, a cloud service provider is responsible for provisioning, managing, and maintaining the servers, and it charges for usage under a pay-for-compute model.

The term ‘serverless computing’ is a misnomer, however: while the user doesn’t own a server, servers are obviously used in a cloud computing model to provide machine resources on demand.

Defining a serverless computing model

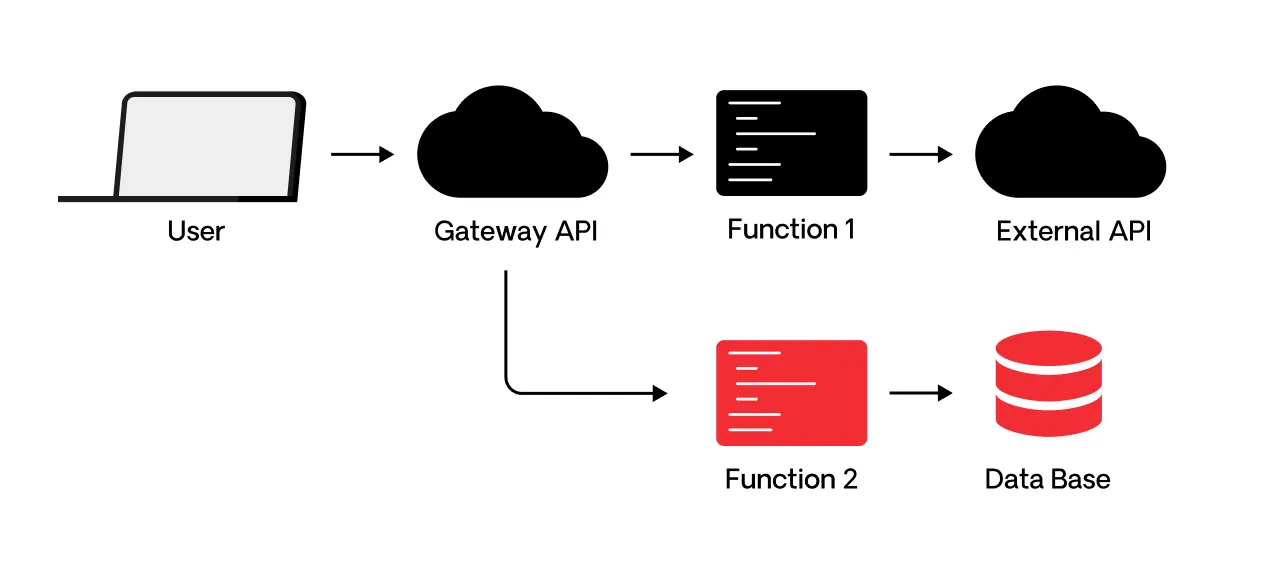

The key to the serverless model is that the developer creates applications as small pieces of code that the cloud service provider executes as functions, a model known as function as a service (FaaS). FaaS provides an event-driven architecture in which functions are triggered by specific events such as HTTP requests, message queues, sensor data, or a mouse click, among others. This model is well-suited to microservices, where the code is already organized into small units of functionality that communicate through APIs.
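As a rough illustration of this event-driven style, the sketch below shows a single function that a platform might call once per event. The `(event, context)` signature follows a common FaaS convention, but the event fields used here (`type`, `body`) and the function name are assumptions made for this example, not any provider's actual schema.

```python
import json


def handle_event(event, context=None):
    """Entry point a platform would call once per triggering event.

    The event fields "type" and "body" are hypothetical and exist only
    for this illustration.
    """
    event_type = event.get("type")
    if event_type == "http_request":
        name = event.get("body", {}).get("name", "world")
        return {"statusCode": 200, "body": json.dumps({"message": f"Hello, {name}!"})}
    if event_type == "queue_message":
        # Business logic only: no server setup, ports, or process management.
        return {"statusCode": 202, "body": json.dumps({"processed": event.get("body")})}
    return {"statusCode": 400, "body": json.dumps({"error": "unsupported event type"})}
```

Calling `handle_event({"type": "http_request", "body": {"name": "Ada"}})` returns a 200 response whose body carries the greeting; anything the function does not recognize is rejected with a 400.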

Serverless cloud service providers use a pay-for-compute model: applications scale up rapidly when they are triggered and scale back down as soon as they are no longer needed, rather than being held idle in memory.

When application resources are no longer needed, they are scaled down to zero and the application ceases running. The customer pays only for the resources they need, and only when they are needed. In this way, customers can have access to large amounts of computing power without owning the responsibility for resource management.

Serverless computing functions are essentially stateless, not retaining any data from earlier instances. Where latency for applications is an issue, companies should evaluate whether serverless computing is the right choice, as it may take seconds for instances to spin up from a cold start.
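The cold-start effect can be sketched with a small simulation. The 50 ms delay is an arbitrary stand-in for loading a runtime and dependencies, and all names are hypothetical; real start-up times vary by provider, language, and package size.

```python
import time

_INIT_DELAY_SECONDS = 0.05  # hypothetical stand-in for runtime and dependency loading
_instance_ready = False     # module-level state lasts only as long as this instance


def _initialize():
    """Simulate the one-time setup work a new instance performs."""
    global _instance_ready
    time.sleep(_INIT_DELAY_SECONDS)
    _instance_ready = True


def invoke(payload):
    """Handle one request and report whether it paid the cold-start cost."""
    started = time.perf_counter()
    cold = not _instance_ready
    if cold:
        _initialize()
    result = payload.upper()  # the actual business logic
    elapsed = time.perf_counter() - started
    return {"result": result, "cold_start": cold, "elapsed_seconds": elapsed}
```

The first call to `invoke` pays the initialization delay, while later calls on the same warm instance skip it. Once the platform reclaims the instance, the next request starts cold again.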

This statelessness also means that any persistent data the application needs must be stored elsewhere. All of the major cloud vendors that offer serverless computing also offer database and storage services that functions can read from and write to.
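A minimal sketch of that pattern follows. `ExternalStore` is a hypothetical in-memory stand-in for a managed database or key-value service; in a real deployment it would be replaced by the provider's storage client.

```python
class ExternalStore:
    """Hypothetical stand-in for a managed database or key-value service."""

    def __init__(self):
        self._data = {}

    def get(self, key, default=None):
        return self._data.get(key, default)

    def put(self, key, value):
        self._data[key] = value


def count_visit(event, store):
    """Stateless function: all durable state is read from and written to the store."""
    key = f"visits:{event['user_id']}"
    visits = store.get(key, 0) + 1
    store.put(key, visits)
    return {"user_id": event["user_id"], "visits": visits}
```

Because the function keeps nothing between calls, any instance can serve any request, as long as every instance talks to the same store.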

Together, serverless computing, microservices, and containers are considered the heart of cloud-native application development technologies.

FaaS (function as a service) is the core of serverless computing

Function as a service is a subset of serverless computing and is essentially the core of it. FaaS allows developers to write code that executes in response to an event. The code only tackles the business logic, not any of the complex infrastructure, which is handled by the cloud service provider.

All of the typical back-end infrastructure for hosting and launching an application over the internet (the physical hardware, server management, provisioning, web hosting, and virtual machine operating system) is fully managed by the cloud service provider. Under the FaaS model, developers can create modular code that is managed as small, independently executable pieces. For fast response, the code can be run at the network edge.

FaaS offers many compelling attributes, including:

- Provisioning time measured in milliseconds

- No administrative burden

- Elastic scaling, including automatic scaling to zero

- No capacity planning necessary

- 100% managed maintenance

- High availability and disaster recovery with no extra effort or cost

- 100% resource utilization since there are no idle states

- Cost granularity in units of 100 milliseconds
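To make the last point concrete, here is a small sketch of how billing in fixed increments could be computed. The 100 ms default comes from the list above; the per-unit price is a hypothetical parameter, not a real provider's rate.

```python
import math


def billed_milliseconds(duration_ms, granularity_ms=100):
    """Round an execution time up to the next billing increment."""
    if duration_ms <= 0:
        return 0
    return math.ceil(duration_ms / granularity_ms) * granularity_ms


def invocation_cost(duration_ms, price_per_unit, granularity_ms=100):
    """Cost of one invocation; price_per_unit is a hypothetical rate per increment."""
    units = billed_milliseconds(duration_ms, granularity_ms) // granularity_ms
    return units * price_per_unit
```

Under these assumptions, a function that runs for 230 ms is billed for 300 ms, that is, three units.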

Other service models related to FaaS

Backend-as-a-service (BaaS) is a service model that includes access to third-party services and apps for activities such as user authentication, push notification, extra encryption, or cloud accessible databases, so that developers can focus on writing the front-end code for applications. All of the backend, behind-the-scenes processes of mobile and web applications are outsourced to the cloud service provider.

In BaaS, serverless functions are usually called through APIs. Unlike serverless computing, BaaS applications are not designed to scale unless the provider offers this ability, and the developer writes the code with this in mind.

Platform-as-a-service (PaaS) is a model where developers rent from the cloud platform provider all the tools needed to develop and deploy applications. This includes the operating systems and necessary middleware. PaaS applications won’t scale automatically unless they are programmed to do so. Unlike serverless applications, which start on demand, PaaS applications must run most of the time in order to be available immediately when needed.

What are the pros and cons of serverless computing architecture?

Pros:

- Cost: Serverless computing allows customers to save on hardware investments and on the cost of maintaining systems in production. Instead of paying for resources that remain idle until they are needed, serverless computing starts and stops the meter as compute resources are triggered by events. This reduces operating costs, personnel costs, and associated costs such as installation, maintenance and support, and software licenses.

- Speed: Parallel processing workloads can run faster in a serverless computing environment than in other forms. Also, code can be run closer to the network edge, reducing latency.

- Elasticity: The serverless architecture enables elastic computing, where resources scale up and down as needed. The cloud provider is responsible for managing this, including all systems, autoscaling, and provisioning.

- Development speed and productivity focus: Developers can focus on how best to develop code from a business perspective and can develop in the language or framework they are comfortable in. This allows developers more time to think through functionality and optimization to create better software. The DevOps cycle may be shortened since there is no need for developers to describe the infrastructure necessary for deploying code into production. The entire process of testing and deploying and having the app run smoothly is now the responsibility of the cloud service provider. This can help to deliver software faster, since dependencies related to software releases are reduced. Also, developers can quickly upload functions, strings of functions, or entire monolithic applications as fixes, new features, or new releases — however they choose to, including updating one function at a time.

- User visibility data: Serverless platforms provide a high degree of visibility of system and user times and can easily collect this information for analysis.
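The elasticity point in the list above can be illustrated with a toy scaling rule. This is only a sketch: real platforms use their own scaling policies, and the `requests_per_instance` parameter is an assumption introduced for this example.

```python
import math


def instances_needed(concurrent_requests, requests_per_instance=1):
    """Instances required for the current load; zero when there is no load."""
    if concurrent_requests <= 0:
        return 0
    return math.ceil(concurrent_requests / requests_per_instance)


def scaling_timeline(load_samples, requests_per_instance=1):
    """Map a series of concurrent-request counts to instance counts."""
    return [instances_needed(load, requests_per_instance) for load in load_samples]
```

For example, with ten requests per instance, the load series `[0, 3, 25, 4, 0]` maps to `[0, 1, 3, 1, 0]` instances: capacity follows demand and returns to zero when traffic stops.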

Cons:

- Performance: Applications that begin from a cold start have a lag time to spin up, which may degrade the application’s performance.

- Cost model doesn’t suit long-running processes: Serverless architectures are best suited for fast-spiking computing, rather than long-running code. Since cloud providers charge for the amount of time applications are running, the economics of serverless computing may not make sense with long-running applications.

- Debugging: The application testing and debugging process is more complicated than in traditional approaches because developers cannot easily replicate the serverless environment to see how code will perform after it’s deployed.

- Resource limits and cost considerations: Some cloud service providers will impose limits on resources that would disqualify high performance computing from using serverless computing platforms. Serverless computing works best from a cost perspective for workloads that spike and scale frequently. Workloads that are predictable and long-running would be better suited for and better cost-positioned for a traditional server environment.

- Spin up times: Because apps are not running continuously, there is a short period of time for processes to start and scale up. While this latency may be acceptable for most functions, it will not be for others, such as a financial trading application that would require an immediate spin-up without delay.

- Security and privacy: It can be difficult to fully assess the security of a cloud provider. While the cloud provider has responsibility for protecting the cloud environment, customers are not able to install their own intrusion detection/protection software on the endpoint and network level. From a privacy standpoint, applications that handle sensitive data run the risk of exposure by external employees or through multi-tenancy situations where cloud providers have several customers on the same physical servers. The risk of data exposure is low, however, when servers are properly configured.

- Vendor lock-in: Cloud providers offer differing levels of service, functionality, and features that may ultimately make it difficult to switch vendors down the road. In general, the deeper a customer goes in taking advantage of the cloud vendor’s offerings, the more difficult it may be to switch.
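The cost-related points in the list above (long-running processes and predictable workloads) come down to simple break-even arithmetic. The sketch below assumes a flat monthly price for an always-on server and a flat price per hour of actual execution; both figures are hypothetical inputs, not real pricing.

```python
def serverless_monthly_cost(busy_hours, price_per_busy_hour):
    """Pay only for the hours in which code is actually executing."""
    return busy_hours * price_per_busy_hour


def break_even_busy_hours(server_monthly_cost, price_per_busy_hour):
    """Busy hours per month above which a fixed-price server is cheaper."""
    return server_monthly_cost / price_per_busy_hour
```

With a server at 50 per month and serverless execution at 0.25 per busy hour, the break-even point is 200 busy hours. A spiky workload that runs 20 hours a month costs 5 on serverless, while one that runs around the clock (about 720 hours) costs 180, well above the fixed server price.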

What is the use of serverless computing?

Serverless computing can handle infrequent, unpredictable spikes in demand and serve asynchronous, stateless apps that need to start on demand, which makes it a fit for a range of use cases. These include microservices, mobile backends, stream processing at scale, multimedia processing, scheduled batch jobs, business logic, data streams, and chatbots.

Serverless computing providers

Amazon Web Services first successfully commercialized serverless computing in 2014. Since then, all major cloud service providers and a number of smaller vendors have introduced serverless computing platforms.

Among these are AWS Lambda, Cloudflare Workers, Google Cloud Functions, IBM Cloud Functions, and Microsoft Azure Functions.


Serverless computing frequently asked questions

Serverless architecture is a cloud computing model where the cloud provider manages the infrastructure, automatically provisioning and scaling resources as needed to run applications.

Adopting a serverless model influences several aspects of app development such as architecture, scalability, cost, and developer workflows.

In cloud computing, serverless components refer to services and features that enable the development and deployment of serverless applications. These components are typically offered by cloud providers and are designed to abstract away the complexities of server management.