A Container For Your App, My Dear!

“Our customers want to deploy OutSystems applications in containers; would you like to lead the team that will deliver this?”

This is how it all started.

Our customers had spoken. So had the analysts. OutSystems 11 would support containers. An engineering team was eager to get started, so where would I fit in?

Our head of architecture and head of development were asking me about my plans for the future. They reassured me that no matter what I decided, I would be able to fulfill the company’s strategy. This is a common practice at OutSystems: to look at someone’s set of skills and challenge them with something new. It's one thing I really like about our culture!

So, as you have probably guessed by now, I said “YES!”

Rising to the Challenge

We had the people, but before we started diving into the topic of containers, it was important that everybody on the team had the same baseline knowledge about what we would be dealing with. Some of us had already read a few things about containers, some of us were just getting started, and some of us had used them in pet projects. But we needed to get the basics right. Time for “Containers 101.”

Containers 101

So let’s have a quick look at the basics. These are the same basics we used to ensure everybody was on the same page: containers, container properties, and container orchestrators.

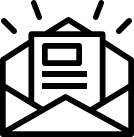

Containers Are a Lightweight Approach to Virtualization

They are like a super minimalist virtual machine with a smaller memory footprint. Containers do not run on a hypervisor; instead, they run on the container engine. Usually, a container includes only the bare minimum requirements for an application to run and function as intended:

- Application binaries

- Application dependencies

- Required libraries

Containers, unlike virtual machines, do not include a full operating system with its own kernel. Instead, the container engine provides a virtual abstraction of the host operating system, which means that the host operating system kernel is shared between containers.

Containers Are Faster, Portable, and Enable Better Workload Density

Since containers are lighter and don’t require you to load a full operating system, starting them is much faster than starting a full virtual machine. Thus, achieving horizontal scalability with containers comes at the cost of a few seconds or less. Doing the same with virtual machines usually takes a few minutes, and that doesn’t even take into account the time required to set up the virtual machine.

How many times have you heard someone stating “It runs on my machine!” when talking about an application or a functionality? How many times have you felt sad because no, it doesn’t run on your machine; it just runs on their machine? Well, when you add containers to the equation, this is something that you will not have to worry about that often. If the application is running in a container, that application can run anywhere: in your container engine on-premises, in your container-as-a-service provider on the cloud, or wherever you have the container engine. Containers grant you a high-level abstraction from your infrastructure.

Finally, containers enable better workload density in an infrastructure. Because containers don’t have to load an operating system, their memory footprint is lower, so you can fit more applications and workloads onto the same hardware than you could with traditional virtual machines.

Container Orchestrators Are There to Work for You

A container orchestrator is a tool that automates the provisioning and management of your containerized infrastructure. On its own, this may sound very simple, but when we consider what an orchestrator is really doing behind the scenes, it becomes clear that there is a lot more to it.

When you use containers, you have hundreds or thousands of components in your environment. In a traditional environment composed of bare-metal or virtual servers, you might have been able to get away with managing things by hand or using lightweight orchestrators that automatically pushed out configurations that you updated manually. But attempting to configure a containerized environment manually just won’t work in a production setting.

So, the orchestrator is there to deal with:

- Performance monitoring

- Load balancing

- Starting and stopping containers as needs increase or decrease

- Configuration management

- Routing

- Ensuring all applications work smoothly together
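To make the "starting and stopping containers as needs increase or decrease" item a bit more concrete, here is a minimal sketch of the kind of decision an orchestrator takes over and over. It is illustrative only: the function names, the per-container capacity figure, and the replica bounds are assumptions made for this example, not the behavior of any particular orchestrator.

```python
import math


def desired_replicas(requests_per_second: float,
                     capacity_per_container: float,
                     min_replicas: int = 1,
                     max_replicas: int = 10) -> int:
    """Return how many containers should be running for the current load.

    All parameters are hypothetical. Real orchestrators use richer signals
    (CPU, memory, custom metrics) and smoothing to avoid flapping.
    """
    if capacity_per_container <= 0:
        raise ValueError("capacity_per_container must be positive")
    needed = math.ceil(requests_per_second / capacity_per_container)
    # Clamp to the configured bounds so we never scale to zero or run away.
    return max(min_replicas, min(max_replicas, needed))


def scaling_action(running: int, desired: int) -> str:
    """Describe what needs to happen to reach the desired container count."""
    if desired > running:
        return f"start {desired - running} container(s)"
    if desired < running:
        return f"stop {running - desired} container(s)"
    return "no change"
```

An orchestrator runs a loop like this continuously, which is exactly the sort of work nobody wants to do by hand across hundreds of containers.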

Getting Ready: Setting Up the Team and Brainstorming

Having covered the basics about containers, it was time for us to dive in!

Fast-forward a few weeks, and I found myself working closely with my new product owner: Tiago Leão. He had been gathering a lot of information from the market and our customers, investigating and understanding their needs, and it was time for us to start translating it into software.

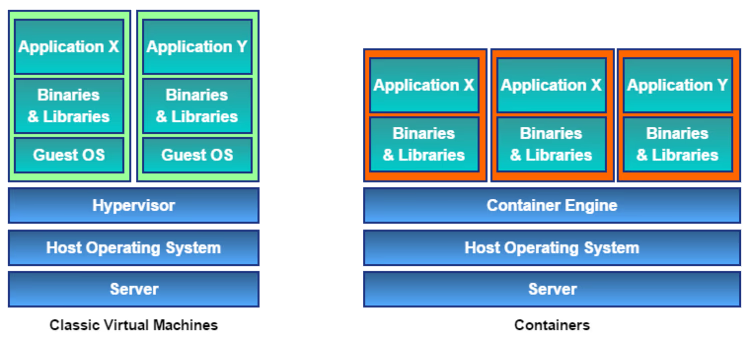

We gathered around a wall and started jotting down our ideas for the kind of work that would be needed. I really like working with him. Even though we have very different personalities, we have found our balance and, instead of clashing, we have always worked as if we were two sides of the same coin.

We stopped when we ran out of space on the wall. It’s a good thing that walls have finite amounts of space.

From an innocent bystander’s point of view, that wall looks like it has run away from an algebra class where the teacher has spent hours demonstrating that 1 is always different from 2. Okay, maybe it isn’t that bad; maybe it just looks as scary!

At this point, we called in the rest of the team, and they looked at the wall.

“This is going to require a lot of work!” they said. Well, they didn’t use those exact words, but there may be children reading this. At that point, we knew the amount of work we had ahead of us, but we really didn’t know how much fun we would have bringing it to life. That was yet to come!

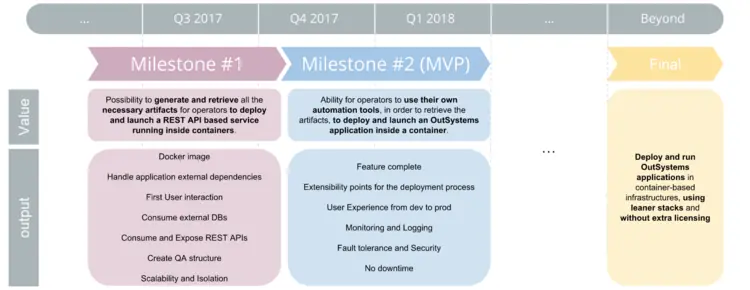

Between Concerns and Goals: A Wishlist

Every project has its own concerns and goals. Some of them are fueled by the market, others are powered by internal needs. Essentially, we had a good idea of what we wanted and an excellent idea of what we didn’t want.

What We Didn’t Want

We didn’t want the product to be tied to any specific container provider. OutSystems applications being deployed to containers would be something new, so we didn’t want to select the wrong container provider. I mean, what would happen if that container provider went out of business? We couldn’t go that way.

We didn’t want the core product to behave differently when an application is running inside a container. I mean, isn’t it an OutSystems application, after all? Why should it be managed differently? We couldn’t go that way.

And we didn’t want to force our customers to have to learn a whole new set of concepts just because an application is running in a container. We take learnability very seriously. We don’t want people to go through a bunch of training just because we’re introducing something new. So, unless there was a really good reason, we weren’t very keen on adding that additional burden. Why would we want to go that way?

What We Did Want

We wanted to target enterprises that already use containers. Containers might be new for us, but they definitely aren’t new for the people who have been using them for years. They have their infrastructures, tools, and processes in place and we want to be a good fit for those processes.

We wanted the product to provide customers with the same level of functionality that they have grown used to in traditional virtualization. OutSystems allows developers to rapidly deliver applications that can be easily changed. OutSystems provides reliable deployment and performs impact analysis, ensuring the whole factory is kept consistent throughout the application lifecycle.

We wanted the upcoming low-code microservices to work in containers just as well as they would work in virtualization. This is pretty obvious, really—new functionality should work seamlessly and effortlessly together!

The Beginning of a Long Quest

The first thing we did was a proof of concept. We picked up a random OutSystems application, detached its source code, and hacked our way towards putting it into a container. This allowed us to get a really good idea about the kind of work that we had ahead. We already knew that it would be a lot; now we knew what to look for.

We just needed to make it very clear for everybody.

The First Challenge: Routing and Addressing for Microservices

Right! So, I should probably say something about that.

This was when having a strong background in large-scale distributed systems came in handy. All I had to do was translate what was really obvious to me into something that would be really obvious to everybody else. This is not as hard as it sounds. The people I work with every day always keep an open mind, and I really enjoy being part of that team.

That’s when our Zones became Deployment Zones. At that point they also gained two properties: an address, which the platform uses to route back-end and microservice requests, and a hosting technology, which allows a choice between container providers and virtualization. The platform doesn’t behave differently when dealing with containers, but some things need to be done differently in that case. Your operations team will be able to decide if the application lives in a virtual machine, in a container, or simply in the OutSystems server. Now, that’s freedom!

Zones started out as a logical aggregation of applications, allowing selective deployment of applications into servers. As Deployment Zones, they have become a bona fide concept in the language, one that enables applications to reach each other, without the developer having to know where they are located—a true cloud-native architecture!
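As a rough mental model, a Deployment Zone can be pictured as a small record with a name, an address, and a hosting technology. The sketch below is only that, a mental model: the class names, the enum values, the `https://` scheme, and the example addresses are all assumptions for illustration and not the platform's actual data model.

```python
from dataclasses import dataclass
from enum import Enum


class HostingTechnology(Enum):
    # Hypothetical values; the choice described above is between
    # container providers and virtualization.
    VIRTUAL_MACHINE = "virtual-machine"
    CONTAINER = "container"


@dataclass(frozen=True)
class DeploymentZone:
    name: str
    address: str  # where back-end and microservice requests are sent
    hosting: HostingTechnology


def service_url(app_zones: dict[str, DeploymentZone], app: str, path: str) -> str:
    """Build the URL a caller uses to reach `app`, wherever it is hosted.

    The caller only knows the application name; the zone supplies the address.
    """
    zone = app_zones[app]
    return f"https://{zone.address}/{app}/{path.lstrip('/')}"
```

The point of the design is visible in `service_url`: the caller never mentions a server or a container, so moving an application to another zone changes the lookup table, not the calling code.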

And that’s when we came up with our reference network architecture for containers.

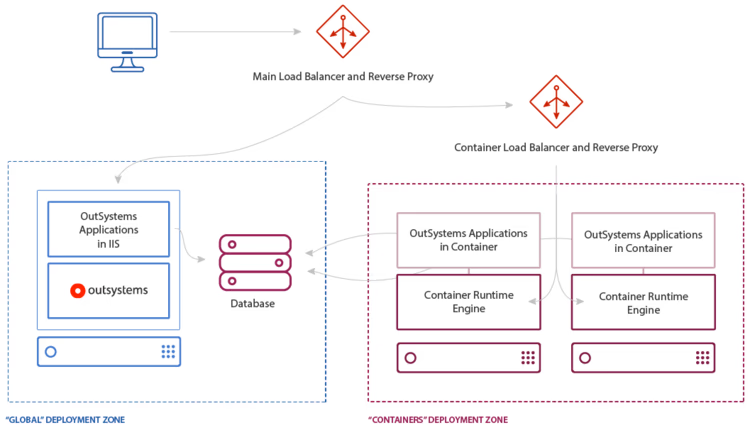

The “Main Load Balancer and Reverse Proxy” is the entry point for the environment. It exposes your environment domain, something like www.randomdomain.com. Then, based on the path suffix of the URL, it forwards the request either to your traditional virtual machines (the “Global” Deployment Zone in this case) or to the “Container Load Balancer and Reverse Proxy.”

Provided either by your orchestrator in cooperation with your container engine’s ingress functionality or by a third-party tool, the Container Load Balancer and Reverse Proxy knows which containers are running that application and is able to forward those requests to the appropriate containers.

All applications in the “Global” Deployment Zone can reach the ones in the “Containers” Deployment Zone using the address of that Deployment Zone, which is probably the address of the Container Load Balancer and Reverse Proxy artifact.
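The two hops described above can be sketched in a few lines. This is a simplification under stated assumptions: it assumes the application is identified by the first segment of the URL path, the upstream names are made up, and round-robin is just one possible way for the container load balancer to pick a container. In practice this logic lives in your reverse proxy or ingress configuration rather than in application code.

```python
import itertools


def route(path: str, containerized_apps: set[str]) -> str:
    """First hop: decide where the main load balancer forwards a request.

    Assumes a path such as '/Orders/Home', where the first segment
    names the application (an assumption made for this sketch).
    """
    segments = [s for s in path.split("/") if s]
    app = segments[0] if segments else ""
    if app in containerized_apps:
        return "container-load-balancer"
    return "global-zone-vms"


class ContainerLoadBalancer:
    """Second hop: knows which containers run each application."""

    def __init__(self, containers_by_app: dict[str, list[str]]):
        # Round-robin over the known container addresses of each application.
        self._cycles = {
            app: itertools.cycle(addresses)
            for app, addresses in containers_by_app.items()
        }

    def pick(self, app: str) -> str:
        """Return the container address that should serve the next request."""
        return next(self._cycles[app])
```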

Don't worry; this sounds more complex than it really is. You probably already have someone with a great networking background in your team, so this will make sense for them.

The Second Challenge: Manual vs. Automatic

Having resolved the routing and addressing issues, it was time to move on to the experience we wanted to deliver. There were two contenders:

- An experience consisting of manual container operations, which would allow us to get feedback from our early adopters sooner but could be seen as a less-than-optimal experience.

- An experience centered on automatic operations and integrations with automation tools, closer to what our customers are used to getting from OutSystems.

We started with the manual experience and ended up delivering both.

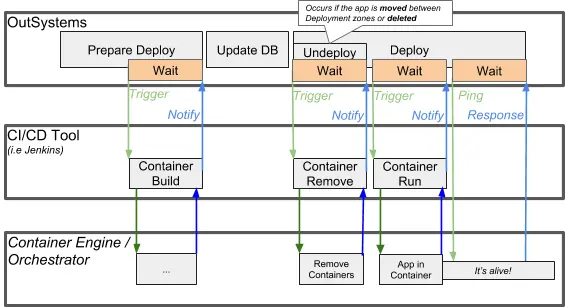

Why? Well, because you can use the manual mode to learn how everything works and fits together. When you are comfortable and want to deploy into containers in production, you can just automate everything, taking advantage of our automation hooks in the deployment process.

These automation hooks are just waiting for you to bring in your CI/CD mechanisms and tools—Jenkins, for example—and take advantage of them. It’s like building a bridge. You start at both sides at the same time, so that you can use the bridge itself to carry the materials for the center segments where the river is usually deeper. Smart, right?

OutSystems will provide you with all the assets that you need to build and run the container. Then you fill in the gap—either manually or automatically—between OutSystems and your container infrastructure. In the end, you can use OutSystems to manage your application lifecycle, just as you are used to doing, and your container orchestrator can manage the health and scalability of your containers.

Alright!

The Third Challenge: User Experience for Operations, Not Geeks

What do you get when you ask a server-side person to come up with a flexible user interface?

A command line.

It might be a command line with history, reverse-search, and auto-complete, but it will be a command line nonetheless. A command line is our favorite kind of interface; it brings great power. And we are willing to take the responsibility that comes with it.

Unfortunately, having to use a command line makes a lot of people angry and sad.

What do you get when you ask a server-side person to come up with a graphical user interface? A half-baked graphical user interface: a few buttons and, if you’re lucky, more than one color. It will probably work quite well for the most common scenarios, degrade reasonably well for the edge cases, and most of the time it will not be that appealing to use. It will also make people angry and sad.

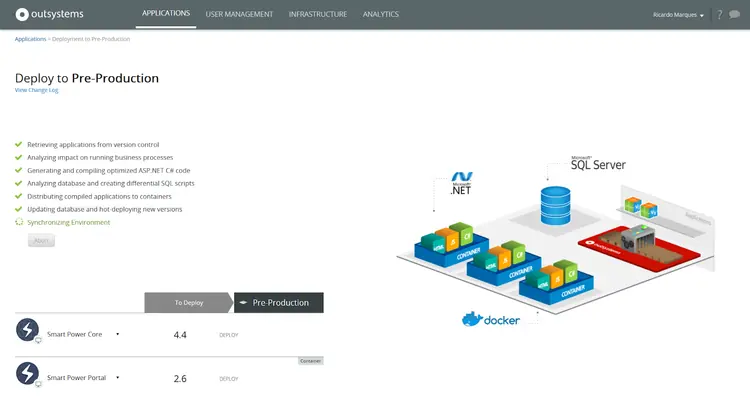

Fortunately, there are UI and UX designers. And we have access to a team of very talented ones. We don’t even have to go that far; they are sitting just across the hall. We can just go there, show them our proposal for a GUI designed by a server-side person, explain the concepts, and voilà! They give us a proposal that looks pretty, meets the requirements, and should be fairly simple and pleasing to use.

We’re quite happy with the result we’ve obtained!

The Fourth Challenge: Deploying Apps into Containers

So after addressing the UX situation, one question still lingered: can we deploy any app into a container?

The short answer is yes. But it wasn’t always like that.

We started out by only allowing server-side applications, meaning, applications without any kind of UI. We went this way because we didn’t want to deal with resource-referencing problems right at the beginning of the project.

The approach looked good, but it did not meet our goals. We knew we would have to revisit this limitation later.

When “later” became “now,” we figured out that we could support pretty much all kinds of applications without significant extra work, provided customers slightly tweaked their infrastructure architecture so that it resembled our reference network architecture. That is a one-time operation in exchange for all of the advantages of containers, which does not seem that bad.

So, how do you deploy an application into a container? Well, you just need to move the application into a Deployment Zone that supports containers and publish the application. And that’s something you do when you are staging the application.

Challenges Aside, Testing Time

Well, the story so far is quite interesting, but I’ve not yet said anything about the tests. And I think I should, because that was also part of getting us where we needed to go.

As always, we want to be sure that what we are building and delivering is error-free. Quality is part of what OutSystems stands for, so we can’t make something publicly available without ensuring it meets our quality demands.

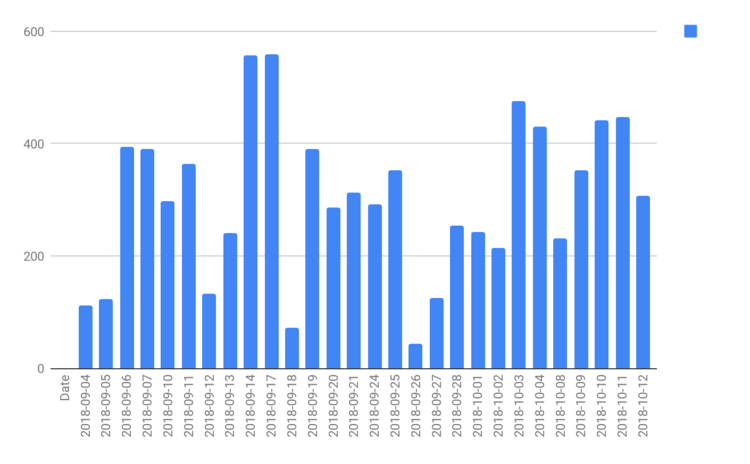

When it comes to containers, we wanted to be sure that the claim of portability also applies to OutSystems applications—so we had to run some of our apps in containers. And we wanted to be able to ensure this repeatedly.

As you might remember, we started out by having manual deployments. As you can probably imagine, since the whole experience was designed with manual operations in mind, using that experience to deliver automated tests was bound to make our lives a bit harder. It did, but then again if it doesn’t challenge you, is it worth doing? So we implemented our own automation on top of our manual operations.

How did we do it, you ask? Well, we’re R&D. We are server-side people, and the command line does not frighten us, so we hacked a solution using PowerShell scripts.

These PowerShell scripts retrieve the bundle generated by the platform, create a container image based on the contents, gather configurations, start the container, and adjust routing rules. Then, they inform the platform that all of these operations have finished successfully.
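Our scripts are PowerShell, but the shape of the flow is easy to show in any language. Here is a minimal sketch in Python of that sequence: run the steps in order, then report back to the platform. Everything named here is hypothetical (`run_deployment`, the step names, the `notify_platform` callback), and reporting a failure back to the platform is an assumption of this sketch rather than something described above.

```python
from typing import Callable

# A step receives a shared context dict and may add values to it
# (for example the bundle path or the image tag). Hypothetical shape.
Step = Callable[[dict], None]


def run_deployment(steps: list[tuple[str, Step]],
                   notify_platform: Callable[[bool, str], None]) -> bool:
    """Run each named step in order and report the outcome to the platform.

    Stops at the first failing step so later steps (such as adjusting
    routing rules) never run against a container that was not started.
    """
    context: dict = {}
    for name, step in steps:
        try:
            step(context)
        except Exception as exc:
            notify_platform(False, f"{name} failed: {exc}")
            return False
    notify_platform(True, "all steps finished")
    return True


# Example wiring with placeholder steps; real steps would call your
# container tooling and your reverse proxy.
def fetch_bundle(ctx: dict) -> None:
    ctx["bundle"] = "app-bundle.zip"  # placeholder file name


def build_image(ctx: dict) -> None:
    ctx["image"] = f"image-from-{ctx['bundle']}"


if __name__ == "__main__":
    run_deployment(
        [("retrieve bundle", fetch_bundle), ("create image", build_image)],
        lambda ok, message: print("success" if ok else "failure", "-", message),
    )
```

Keeping each operation as a separate named step is what later made it straightforward to move the same flow behind automation hooks and a CI server.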

That’s the kind of stuff we do. And we do it frequently! No fear!

We have since started migrating those tests to take advantage of the automation hooks and our own Jenkins infrastructure, so that our test coverage is even greater. This is how we know you will likely not run into any issues caused solely by the application living inside a container.

The End is the Beginning

I know this is a really long blog post and it's packed with new concepts, but we're here to help if there's something you don't understand. All our work has enabled us to become really good at answering questions.

Speaking of work, we were almost overwhelmed by how much we did. So, we gathered our team and savored our success—at some really nice lunches and dinners that OutSystems treated us to.

That was our thank-you note for delivering amazing stuff! Having good food with great people makes us happy. Being happy feels good. Yes, we are human!

Do You Know What’s Better Than Being Happy?

Being happy because our customers are happily using the stuff that we created.

And how do we know that customers are happy? We ask them. And that’s precisely what we’re doing. Are you using containers with OutSystems? Then we’d like to hear from you!

Have you not yet given OutSystems a try? Well, you can, and it’s free! Go ahead. You will be helping us help you shape your future with OutSystems!

Do you want to learn more about containers in OutSystems? Check out our official documentation on the topic!