Houston, We Have a Problem: Empathy is Missing from Voice Assistants

Voice assistants are the new dinner party showstoppers. Recent technological advances and novel approaches have pushed them into the spotlight, and for good reason. Parties come alive as guests take turns giving commands to the voice assistant.

“Lights off!” The lights turn off. The dinner party guests are impressed.

“Turn on the lights!” The lights come back on. Everyone laughs.

“Play Alanis Morissette.” The music starts playing.

“Pause!” The music stops playing.

“What will the weather be like today?” The voice assistant announces the weather forecast.

“How long is it going to rain for?” Silence. Nothing happens. Everyone leans forward.

“When will it stop raining?” The voice assistant says, “Hmm, I’m not sure what you meant by that question.”

“OK, please play Slade.” The voice assistant says, “Here’s a radio station you might like: Katy Perry…”

“I don’t want to listen to Katy Perry! Play Slade!” The voice assistant says, “I’m not sure what you mean by that.”

It’s a great show when it works, and even when it doesn’t, it’s guaranteed entertainment. Voice assistants are genuinely good at simple, learned instructions, such as direct commands or web searches. What’s more, with the emergence of all sorts of IoT devices, they create a world of fun, letting us control devices from the other side of the room. They have become useful for a limited range of tasks, but we are still reluctant to rely on them where accuracy and trust are necessary. Trust is essential in any relationship: for a voice assistant to be useful, we must trust its understanding of our words, tone, and sentiments; in other words, our intent.

Do Voice Assistants Provide the Right Experience?

Voice assistant sales are going through the roof, which raises the question: do they deliver the right experience?

We’ve seen how an awkward and uncomfortable user experience can bring about the demise of a promising technology. It happened with 3D TV, a technology that is now officially dead. Not long ago, with skyrocketing sales and sales projections, 3D TV seemed to be the next big thing. But its success was short-lived because it failed to provide the right experience.

For decades now, we have been put off by feeble attempts at voice recognition. Look at the infamous IVR (interactive voice response) systems used by call centers, the ones that seem designed to stop us from solving our problems. But that will change rapidly.

Artificial intelligence is now making the difference in speech recognition. New deep learning techniques have allowed the field to overcome some of its earlier challenges. Throughout last year, IBM, Baidu, Google, and then Microsoft leapfrogged one another in improving speech recognition accuracy. In its latest report, Microsoft announced that it had lowered the word error rate to an impressive 5.1 percent, below the human error rate of 5.9 percent. Remarkable as that achievement is, it still means getting roughly one word in 20 wrong. If we misheard people that often in real life, everyone around us would go mad. Humans cope differently: we lean heavily on context. We look at the circumstances, our past experience, body language, tone, and other clues to fill in the gaps and ensure effective, accurate communication.
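Figures like 5.1 percent are word error rates, commonly defined as (substitutions + deletions + insertions) divided by the number of words in the reference transcript. At 5.1 percent, that works out to about 1.02 errors per 20 words, which is where “one word in 20” comes from. The sketch below computes the metric as a word-level edit distance. It is a minimal illustration under that standard definition, not Microsoft’s evaluation code; the function name `word_error_rate` is our own, and real benchmarks also normalize case and punctuation before scoring, which this sketch skips.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Hypothetical helper: (substitutions + deletions + insertions) / reference
    length, computed as a word-level Levenshtein (edit) distance."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words (row 0: j insertions from an empty reference).
    prev = list(range(len(hyp) + 1))
    for i in range(1, len(ref) + 1):
        curr = [i] + [0] * len(hyp)  # i deletions against an empty hypothesis
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            curr[j] = min(
                prev[j] + 1,         # deletion: reference word missing from hypothesis
                curr[j - 1] + 1,     # insertion: extra word in hypothesis
                prev[j - 1] + cost,  # substitution, or a match when cost == 0
            )
        prev = curr
    return prev[len(hyp)] / len(ref)


# One misrecognized word out of five gives a 20 percent error rate.
print(word_error_rate("when will it stop raining", "when will it stop training"))
```

A single misheard word in a short command is a large share of that command, which is one reason a recognizer that is right 95 percent of the time can still feel unreliable at the dinner table.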

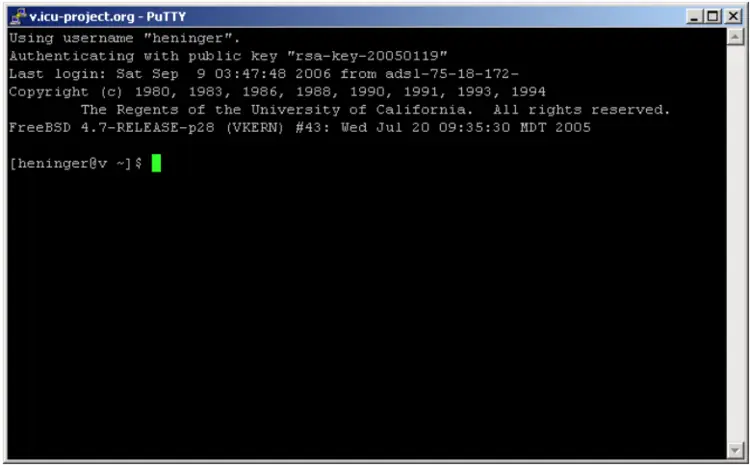

Today, voice commands need to be fairly short and carefully worded to be correctly recognized and interpreted. It’s still not a simple experience: we need to remember exactly how instructions have to be phrased. We are expected to move closer to the machine, to reach out, to make an effort to be understood. And when the machine “doesn’t get us”, we feel frustrated. Coming from a developer background, I find it reminiscent of sitting in front of a rudimentary computer with a cursor blinking incessantly at the command line.

Voice Assistants Must Smarten Up, So We Don’t Have to

Jakob Nielsen, who published the well-known usability heuristics, advocated “recognition rather than recall”: make things easy for users by minimizing the amount of information they need to learn and remember. But, just as with a command prompt, we first have to guess what we can throw at this interface.

With frequent use, we learn a set of instructions, our proficiency rises, and we become productive users. Through repetition, we develop the mental patterns needed to use the system intuitively. But each new system requires a new learning experience.

Voice assistants can become intuitive and easy to use, but only if people use them repeatedly. Sporadic use is not enough for us to recall the commands and develop the required mental patterns.

Command lines were indeed a key step in the evolution of computing interfaces, but it was the invention of graphical user interfaces (GUIs) that revolutionized computing and led to the mainstream use of computers. That required a key technological leap.

So what will be the advance to catapult voice interfaces to a new level, overcoming the boundaries of human recall?

The Current Voice Interface Paradox

“It’s not surprising that 69 percent of the 7,000-plus Alexa “skills” — voice apps, if you will — have zero or one customer review, signaling very low usage” (source).

Here’s the paradox.

Voice is perhaps the most human-like way to interact with a machine. It’s a technological advance that feels familiar. How hard can it be? Just talk to the machine and it’ll understand you, right? But here lies the hard realization:

Currently, the interface that should be the simplest to use is the most difficult for beginners.

This will change, but it will not be easy to achieve. We can’t rely on visual interfaces to ease our communication with voice assistants, and talking to them is still difficult, awkward, and at times frustrating. New strategies are needed to bring them closer to us and make them more intuitive.

The Missing Piece For Voice Assistants is Understanding You

Microsoft did exactly that. In the test that set its speech recognition record, it used a new strategy. In its own words, it “strengthened the recognizer’s language model by using the entire history of a dialog session to predict what is likely to come next, effectively allowing the model to adapt to the topic and local context of a conversation.”

In other words, it used more context (just as humans do) to fill in the gaps.

Context was the missing piece for that breakthrough. For the interface to really work, it will need to be more empathetic. For voice interfaces to deliver on their promise, they will have to understand us: our needs, our unique communication style, what drives us, and how we think. They will need to fill in the gaps and read into what we are really saying, because taking us at face value leaves out a lot of contextual awareness.

When we ask our assistant for more light, we could be asking for a bright light that floods our workspace so we can work on a detailed task, or for a soft, warm light that helps us relax at the end of what was a hard day. Our voice assistant knows which one we mean from the current circumstances, our past behavior, our daily planner, and the fact that we just ordered a bottle of wine, or two.

This new strategy, backed by continuous advances in machine learning, the steady growth of computer processing power, and more sensory information about us and our surroundings, will deliver on this promise.

To reach their full potential, voice assistants will need far more information about how our brains work. They will have to learn (deeply) about us; the effort will be on their side. They will have to learn the circumstances that affect us and, most of all, what makes you...You.