6 Steps to Increase Accuracy of Test Automation

Testing is one of the most time-consuming parts of the development process, and many name it as one of the main causes of slow app delivery.

In its quest to help companies deliver applications fast, right, and for the future, OutSystems has partnered with Valori to define a unique new approach to testing applications. The goal is to help OutSystems customers accelerate their QA efforts and overall application delivery strategy.

In an article I wrote a few months ago, I focused on how Valori’s Core Layer Testing approach helps organizations speed up quality assurance and testing using OutSystems capabilities. By optimizing the testability of the code, Valori makes sure testers keep up with the speed of developers.

In this second article, I’ll focus on defining test cases for each test goal to ensure faster, more robust automated test cases with better coverage.

Ask yourself these questions:

- Is the time it takes your team to release new functionality increasing?

- Are you investing more in keeping the lights on than in innovation?

- Do you have concerns about the risk coverage and maintainability of your test sets?

- Do your automated tests fail for reasons unrelated to the goal of the test case?

If you answered “yes” to any of these questions, this article is for you.

6 Steps to Increase the Accuracy of a Test Case

Valori defines six steps to make a test case as accurate as possible:

- Refine the user story.

- Define your test goal and test cases.

- Determine your test steps.

- Focus on the test goal.

- Plot your tests on the Architecture Canvas.

- Use Test Data Management.

To explain these 6 steps, we’ll use the following user story:

“As a customer, I want to be able to choose between priority or standard shipment so that I can order products according to my needs.”

Step 1: Refine the User Story

User stories, like the one above, are the input for a Refinement Session and should always relate to business requirements.

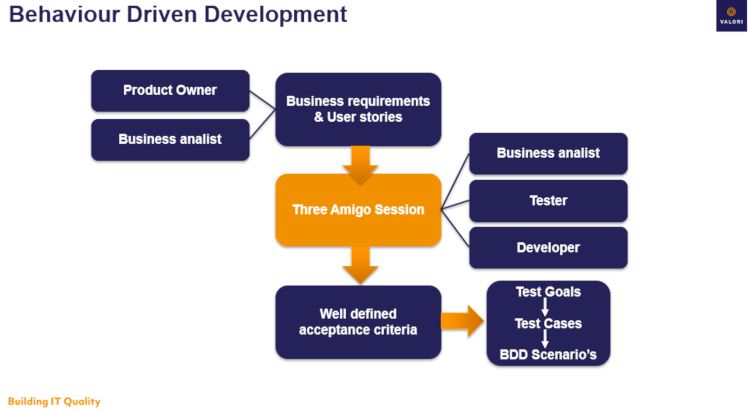

In Behaviour Driven Development (BDD), this Refinement is done in a so-called ‘Three Amigos Session’, where at least one Developer, one Tester, and one Business Analyst discuss the User Story. Each of them represents a different expertise.

The output of this session is a set of well-defined acceptance criteria that everybody understands and agrees upon. These criteria can then be further detailed in test cases and BDD scenarios at a later stage. Eventually, these BDD scenarios are used in test automation to define test steps.

At the end of the Three Amigos Session, everybody present in the meeting should know what is needed to implement the User Story.

Looking at the User Story above, there are quite a few questions a Tester needs answers to. We’ll pick the following example:

- What is the cost difference between standard and priority shipment?

These questions are typically asked during the Three Amigos Session and often result in new requirements and/or acceptance criteria. The figure below shows an overview of this process:

After the Three Amigos Session, the User Story is expanded with the following acceptance criteria:

Acceptance criterion 1: standard shipment costs 5$;

Acceptance criterion 2: priority shipment costs 10$.

Step 2: Define Your Test Goal and Test Cases

A well-defined acceptance criterion can often be translated into a test goal and a respective test case. Each of the test cases is related to a test goal and each test goal to a requirement. Only the most important test cases become part of the automated regression set:

Test goal: Verify if shipment costs are calculated correctly.

Test case 1: verify if standard shipment costs 5$;

Test case 2: verify if priority shipment costs 10$.

Step 3: Determine Your Test Steps

A common way to determine your test steps is through a “Given-When-Then” statement. In Behaviour Driven Development, this is called a BDD Scenario. For our test cases, it looks similar to this:

BDD Scenario 1:

Given a customer has Book X in his shopping cart and has selected standard shipment

When shipment costs are calculated

Then I expect shipment costs of 5$ to be applied.

BDD Scenario 2:

Given a customer has Book X in his shopping cart and has selected priority shipment

When shipment costs are calculated

Then I expect shipment costs of 10$ to be applied.

The BDD notation will eventually be used in our automated test cases to define our test steps. It will also serve as a feedback loop where the business can validate if the scenario verifies the requirement correctly.
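As a rough illustration of how the two scenarios could map onto automated test steps, here is a minimal Python sketch. The `Cart` class, the `calculate_shipment_costs` function, and the price table are hypothetical stand-ins invented for this example. They do not represent OutSystems or Valori tooling, only the Given-When-Then structure.

```python
from dataclasses import dataclass, field

# Prices taken from the two acceptance criteria (5$ and 10$).
SHIPMENT_COSTS = {"standard": 5, "priority": 10}


@dataclass
class Cart:
    """Hypothetical shopping cart, used only for this illustration."""
    shipment_option: str
    items: list = field(default_factory=list)


def calculate_shipment_costs(cart: Cart) -> int:
    """Hypothetical stand-in for the real shipment cost logic."""
    return SHIPMENT_COSTS[cart.shipment_option]


def test_standard_shipment_costs_5():
    # Given a customer has Book X in the cart and has selected standard shipment
    cart = Cart(shipment_option="standard", items=["Book X"])
    # When shipment costs are calculated
    costs = calculate_shipment_costs(cart)
    # Then shipment costs of 5$ are applied
    assert costs == 5


def test_priority_shipment_costs_10():
    # Given a customer has Book X in the cart and has selected priority shipment
    cart = Cart(shipment_option="priority", items=["Book X"])
    # When shipment costs are calculated
    costs = calculate_shipment_costs(cart)
    # Then shipment costs of 10$ are applied
    assert costs == 10
```

Each comment repeats one line of the scenario, so the business can still read the test and check it against the requirement.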

Step 4: Focus on the Test Goal

The main difference between a manual and an automated test case is that a manual test case touches as many requirements as possible within one flow, while an automated test case should focus on a single test goal.

Using the scenario above, the manual test case would verify the shipment costs following these test steps:

1. Log in as a customer;

2. Navigate to the book section;

3. Search for a book;

4. Add the book to the shopping cart;

5. Select <<shipment option>>;

6. Verify <<shipment costs>>.

However, when looking at the test goal, the only step contributing to achieving it is step 6.

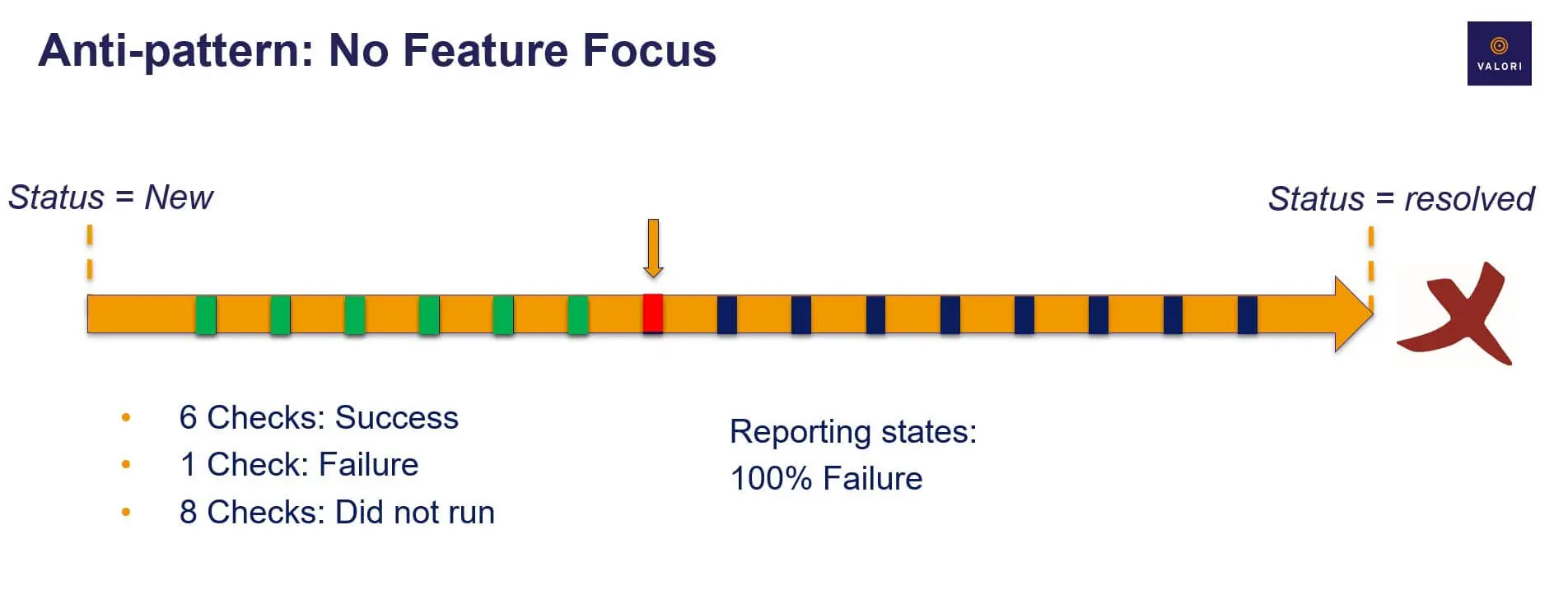

For automated tests, this approach puts the quality of your test report and coverage at risk.

If, for example, step 3 fails, the test case “Verify if priority shipment costs 10$” also fails. In reality, however, the functionality to search for a book has already failed and the calculation of shipment costs hasn’t even run.

Introducing Feature Focus Tests

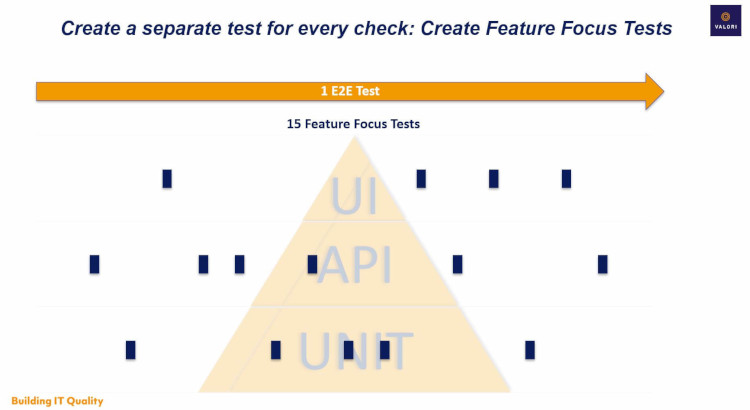

In automated tests, we can solve this problem by making a standalone, independent test case for every test goal. Valori calls these Feature Focus Tests. This improves the robustness and maintainability of the automated tests.

Looking at the test goal “Verify if shipment costs are calculated correctly”, we need to find ways to isolate this logic for our test.

We want to avoid the dependency on steps 1-5 and test step 6 directly. The question you want to ask yourself here is:

- Do all the test steps in this test case contribute to the test goal?

Any test step that does not contribute to the test goal is waste. Eliminating this waste rapidly decreases the time needed to analyze defects. The figure below summarizes this principle:

Step 5: Plot Your Tests on the Architecture Canvas

In the end, if we combine Feature Focus tests with the Core Layer testing described in my previous article, each Feature Focus Test within one User Story is performed on a different layer of the Architecture Canvas:

- Logging in and navigation steps are performed on the End-User Layer;

- Searching product information is done via the Foundation Layer that integrates with a product catalog module or API;

- Calculating shipment costs will call a specific action in the Core Layer.
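One simple way to keep track of this mapping is a small test inventory that records the layer each test targets. The Python sketch below is purely illustrative: the test names are hypothetical, and the layer names are taken from the list above.

```python
from collections import Counter

# Hypothetical inventory: (test name, targeted layer of the Architecture Canvas).
TEST_INVENTORY = [
    ("login_and_navigation", "End-User"),
    ("search_product_information", "Foundation"),
    ("standard_shipment_costs_5", "Core"),
    ("priority_shipment_costs_10", "Core"),
]

# Count how many Feature Focus Tests target each layer.
tests_per_layer = Counter(layer for _, layer in TEST_INVENTORY)

for layer in ("End-User", "Foundation", "Core"):
    print(f"{layer} Layer: {tests_per_layer[layer]} test(s)")
```

An overview like this makes it easy to spot a layer that has no tests, or a test goal that is only covered through the screens.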

After running all specific Feature Focus tests, we finish by running one End-to-End test case to validate the screen flow. Looking at the Testing Pyramid, this looks like the figure below:

Service-Oriented Architecture is Key

To be able to use the above approach and fully speed up test execution, a service-oriented architecture is key. Feature Focus tests can only run independently from each other if the application logic is set up to run independently as well.

Like Core Layer Testing, Feature Focus tests benefit from the structure described in the Architecture Canvas. Specific business logic should be steerable independently from data or other logic in your application so that all these specific functions can be targeted separately.

Without a service-oriented architecture, your tests will always depend on multiple preconditions, navigation steps, and setup scenarios, which considerably increase the execution time of your tests. This opens up risks related to test stability and the quality of the test report and coverage, as shown in step 4.
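The Python sketch below shows what "steerable independently from data" can mean in practice, under assumptions of our own. The shipment logic receives its rate lookup as a parameter, so a test can supply rates directly instead of preparing a database or clicking through screens. The function and parameter names are hypothetical.

```python
from typing import Callable

VALID_OPTIONS = ("standard", "priority")


def calculate_shipment_costs(option: str, get_rate: Callable[[str], int]) -> int:
    """Business logic only: validate the option, then look up its rate.

    `get_rate` is injected, so the logic does not know where rates are stored.
    """
    if option not in VALID_OPTIONS:
        raise ValueError(f"unknown shipment option: {option}")
    return get_rate(option)


def test_priority_shipment_costs_10():
    # The test supplies its own rates; no database or screen flow is needed.
    rates = {"standard": 5, "priority": 10}
    assert calculate_shipment_costs("priority", lambda option: rates[option]) == 10


def test_unknown_option_is_rejected():
    try:
        calculate_shipment_costs("overnight", lambda option: 0)
    except ValueError:
        return
    raise AssertionError("expected a ValueError for an unknown option")
```

In production, the same function would receive a lookup that reads the real rates. The test and the logic stay the same.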

Step 6: Use Test Data Management

Finally, many tests require a specific test data set. This is the final step in setting up a successful OutSystems testing strategy. There are multiple ways to achieve this, such as synthetic test data generation, data subsetting (and anonymization), and mocking. We’ll talk about Test Data Management in our next article.
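As a small preview, here is a minimal Python sketch of two of these techniques: synthetic data generation and subsetting with masked customer data. The order structure and function names are hypothetical. Hashing the email is only a simple form of pseudonymization, so treat it as an illustration rather than a complete anonymization approach.

```python
import hashlib
import random


def generate_orders(count, seed=42):
    """Generate synthetic orders; a fixed seed keeps the data set repeatable."""
    rng = random.Random(seed)
    return [
        {
            "order_id": i,
            "customer_email": f"customer{i}@example.com",
            "shipment_option": rng.choice(["standard", "priority"]),
        }
        for i in range(1, count + 1)
    ]


def mask_email(order):
    """Replace the email with a hash-based placeholder (pseudonymization)."""
    digest = hashlib.sha256(order["customer_email"].encode("utf-8")).hexdigest()[:12]
    return {**order, "customer_email": f"{digest}@example.invalid"}


def subset(orders, shipment_option):
    """Keep only the orders a test needs, with customer emails masked."""
    return [
        mask_email(order)
        for order in orders
        if order["shipment_option"] == shipment_option
    ]


orders = generate_orders(10)
priority_orders = subset(orders, "priority")
print(f"{len(priority_orders)} of {len(orders)} orders use priority shipment")
```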

If you’ve had problems with the maintainability and robustness of your test sets, and if you often run into failed test cases that never reached the actual test goal, we hope this article gave you the right direction for solving this issue.

If you have any questions related to this topic, feel free to contact me at BrianvandenBrink@valori.nl

About Valori:

Valori is a trusted OutSystems Partner focusing on Quality Assurance and Testing. Based in Utrecht, The Netherlands, Valori worked together with OutSystems Product Development teams to define a unique approach to testing OutSystems applications. This approach is based on multiple Best Practices that both OutSystems and Valori have gathered over the last 5 years.