You Can't Have Fast Development without Fast QA

At Valori, we have been working with the OutSystems product development team to define a unique new approach to testing OutSystems apps. The goal is to help OutSystems customers accelerate their QA efforts and overall application delivery strategy.

Ask yourself these questions:

- Does it take your team more and more time to release new functionality?

- Are you investing more in keeping the lights on than in innovation?

- Do you have concerns about the risk coverage and maintainability of your test sets?

If you answered “yes” to any of these questions, this article is for you.

“Tech First, Test Second”

In a world that depends on trustworthy information and technology, we at Valori believe that to innovate without hassle, it is crucial to assure the overall quality of your technology stacks. Therefore, for OutSystems, we have a unique approach called “Tech First, Test Second.”

Supporting this approach is our in-depth knowledge of the technology stack we use for development. This knowledge has allowed us to create exclusive testing solutions specific to OutSystems, start a dialogue with the development teams and create ultra-short feedback loops.

The Power of Fast Feedback

Testing activities need to keep up with development, so testing needs to leverage OutSystems the same way development does. Only when feedback is fast and points directly at the problem can the team fully embrace it. This feedback builds trust that both existing and new functionality work as desired and that integrated systems maintain a stable level of quality.

Automated Feedback Is an Absolute Must

When developing software incrementally, automated feedback is an absolute must.

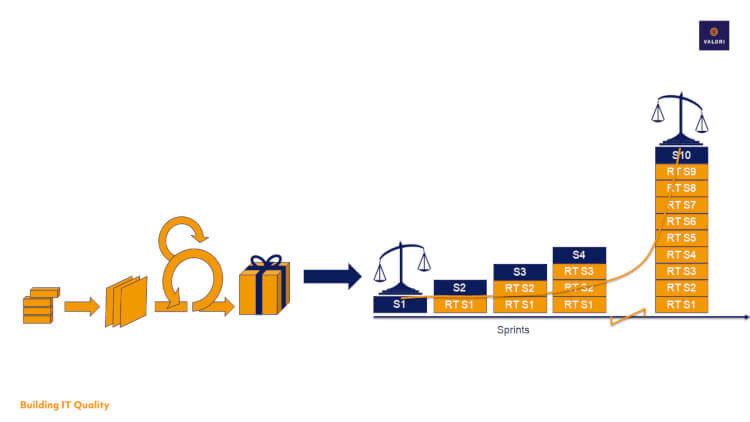

Take a look at figure 1.

In most projects, a sprint (left side of the figure) contains new features to be developed (S1). In the second sprint, new features are added (S2). The 10th increment will still have a similar amount of innovation (S10), with maybe some extras because of an increased velocity. However, the previous 9 increments will have to remain in production as well (RT S1-S9). Verifying this manually will drastically decrease the speed of the release cycle. Therefore, if you want to continue innovating, automated regression tests are essential.
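To make the growth of regression work concrete, here is a small sketch. It assumes, purely for illustration, that every sprint delivers the same number of features and that each delivered feature needs one regression check; the value of 10 features per sprint and the function name are hypothetical and do not come from a real project.

```python
# Illustrative assumption: a constant number of features per sprint.
FEATURES_PER_SPRINT = 10


def regression_scope(sprint: int, features_per_sprint: int = FEATURES_PER_SPRINT) -> int:
    """Features from earlier sprints that must be re-verified in the given sprint."""
    return (sprint - 1) * features_per_sprint


# New work stays flat per sprint, while the regression scope grows with every release.
for sprint in (1, 2, 10):
    print(f"S{sprint}: new={FEATURES_PER_SPRINT}, regression={regression_scope(sprint)}")
```

Under these assumptions, the regression scope in the 10th sprint is nine times the size of the new work, which is why checking it by hand slows down the release cycle.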

The OutSystems Architecture Canvas and the Agile Testing Pyramid

The Agile Testing Pyramid describes the levels on which automated testing can be performed. The key message here is that the lower levels of the pyramid are faster and more robust to test, and therefore less costly.

The Architecture Canvas is an OutSystems architecture tool designed to make Service-Oriented Architecture (SOA) simple by promoting the correct abstraction of reusable (micro)services and the correct isolation of distinct functional modules. The combination of the two forms the backbone of our OutSystems testing strategy.

Abstraction and isolation of distinct functional modules are key to accelerating testing activities and shifting them to the lower levels of the Agile Testing Pyramid. Looking at both the Agile Testing Pyramid and the OutSystems Architecture Canvas gives us insight into which modules of an OutSystems application are testable, and at which level of the pyramid.

For this, take a look at figure 2:

Testing business logic is done on the Core (logic) layer, primarily via units and APIs. Internal services and integrated systems are tested via APIs on the Foundation and Core layers of the application. Testing User Journeys and process flows via the User Interface is done on the End User Layer. Most test effort is spent on the lower levels of the pyramid, leaving the End User Layer to be covered by a maximum of 1 or 2 End-to-End tests per User Story.

The OutSystems BDD Framework

The BDD Framework is a test framework within OutSystems Service Studio and uses a BDD syntax to directly test business logic present in Server and Service Actions. For the BDD Framework to work, you need to follow the Architecture Canvas guidelines when developing.

We indicate four critical success factors for test automation as follows:

- Speed of execution

- Robustness

- Maintainability

- Risk coverage

Many test automation projects fail because one or more of these success factors are not met. Measured against these success factors, the BDD Framework has pros and cons:

Pros:

- Robustness

- Speed of execution

Cons:

- Maintainability

- Risk coverage

Good maintainability and risk coverage are challenging to achieve because:

- Expected results are hard coded.

- Data-driven tests are either also hard coded or use an Excel template, and the data cannot be supplied dynamically at runtime.

- Setup and teardown scenarios can become quite complex.

For extensive scenarios, these three things make the framework less flexible and rather time-consuming to implement. This is why we recommend that organizations mainly use it for basic unit test scenarios. This will provide the fastest feedback possible. For scenarios with more instances (like data-driven tests) or tests at higher levels of the pyramid, we recommend an additional solution.
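As an illustration of what a data-driven test looks like when the cases are kept as data rather than hard coded into each test, here is a minimal Python sketch. It is an analogy only and does not use the OutSystems BDD Framework; the `calculate_discount` function is a hypothetical stand-in for business logic in a Server or Service Action, and the discount rule and values are invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Case:
    order_total: float
    is_member: bool
    expected_discount: float


def calculate_discount(order_total: float, is_member: bool) -> float:
    """Hypothetical business rule: members get 10% off orders of 100 or more."""
    if is_member and order_total >= 100:
        return round(order_total * 0.10, 2)
    return 0.0


# The test cases are plain data, so adding a scenario means adding a row.
CASES = [
    Case(50.0, True, 0.0),
    Case(100.0, True, 10.0),
    Case(250.0, True, 25.0),
    Case(250.0, False, 0.0),
]


def run_cases(cases):
    """Return the cases whose actual result differs from the expected result."""
    failures = []
    for case in cases:
        actual = calculate_discount(case.order_total, case.is_member)
        if actual != case.expected_discount:
            failures.append((case, actual))
    return failures


print(run_cases(CASES))
```

Because inputs and expected results live in one table, the same rows could also be loaded from a file or generated at runtime without touching the test logic.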

Core Layer Testing by Valori

We’ve worked together with OutSystems to create Core Layer Testing, a solution complementary to the BDD Framework. This solution leverages the Server and Service Actions in the Core Layer to test units, the integration between units, and APIs, and even to reuse these Actions in end-to-end tests.

With little impact on precious development time, this keeps test sets maintainable, no matter how often you release new functionality. Test execution stays fast for all layers of the application and test coverage is maximized, which eventually leads to higher-quality apps.

We have seen many projects where test automation is mainly done by steering the UI of the application, resulting in slow, error-prone tests. With Core Layer Testing, we changed this. We use OutSystems to increase the testability of our apps. We focus on the business logic and bypass the UI as much as possible. Quality becomes a team responsibility by optimizing the testability of the code.

Step 1: Accessibility

To make Server and Service Actions accessible to the outside world, you need to wrap the Action(s) into a REST API. In this wrapper, you shouldn’t implement extra logic for the Action(s) to run because, to be meaningful, the test should run against the original logic. The team member creating the test should be capable of knowing what Actions need to be exposed. Creating this REST API is standard OutSystems functionality and shouldn’t take more than a few minutes.
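The idea of a wrapper without extra logic can be sketched in Python as follows. This is an analogy rather than OutSystems code: in practice the wrapper is an exposed REST API method built in Service Studio. The `calculate_shipping_cost` function, its pricing rule, and the field names `WeightKg`, `Express` and `ShippingCost` are all hypothetical.

```python
def calculate_shipping_cost(weight_kg: float, express: bool) -> float:
    """Hypothetical business logic standing in for a Server or Service Action."""
    base = 5.0 + 1.5 * weight_kg
    return round(base * 2 if express else base, 2)


def shipping_cost_endpoint(body: dict) -> dict:
    """Thin wrapper: maps the request body to the Action's inputs and returns its output.

    It deliberately contains no validation, defaults, or calculations of its own,
    so a test that calls it exercises the original logic only.
    """
    return {"ShippingCost": calculate_shipping_cost(body["WeightKg"], body["Express"])}


print(shipping_cost_endpoint({"WeightKg": 2.0, "Express": False}))
```

Since the wrapper adds nothing, its response always matches what the Action itself returns for the same inputs.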

Best practice is to place the exposed REST APIs created for Core Layer Testing inside a dedicated Module. This Module can then be prevented from being deployed to production, so basic security measures are sufficient. The Module will have no strong dependencies on other Modules, since it only references the existing Actions. And because each REST API should be a fairly simple wrapper of the original Action, any team member should be able to create and maintain these APIs, which limits the impact on precious development time. Figure 3 explains this setup:

Step 2: Taking Control of Test Data

The main purpose of this step is to make sure the test controls the data used in the Action at runtime. Data that is used inside the Action and that could affect its outcome should always be steerable through the input of the Action. When this is set up correctly, the test can run completely independently of any pre-existing test data. The team member creating the test plays an important role in determining what data is crucial to create meaningful tests.
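A minimal Python sketch of this principle, assuming a hypothetical subscription check: instead of looking up the customer and reading the system clock inside the logic, both are passed in as inputs, so the test decides exactly what data the logic sees. The `Customer` structure and `is_subscription_active` function are invented for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Customer:
    name: str
    subscription_end: date


def is_subscription_active(customer: Customer, today: date) -> bool:
    """Hypothetical Action: everything that affects the outcome arrives via the input."""
    return today <= customer.subscription_end


# The test supplies its own customer and its own "today"; no stored data is needed.
customer = Customer("Test Customer", date(2024, 6, 30))
print(is_subscription_active(customer, date(2024, 6, 30)))
print(is_subscription_active(customer, date(2024, 7, 1)))
```

With this shape, boundary cases such as the last valid day can be tested on any day and in any environment.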

Step 3: The Expected Result

The wrapper gets the same input parameters as the Action or Actions it wraps. This makes it possible to send any kind of data to the Action. The wrapper also gets one output parameter called “Result.” This output parameter can be a Structure if multiple values need to be passed; a Structure also gives the response body a clear layout. If multiple Actions are chained, it’s possible to test the integration between them by taking data from one Action and using it in the next. Verifications are done on the output parameter of the wrapper.
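The following Python sketch shows one way to picture a wrapper that chains two Actions and returns a single structured “Result”. It is an analogy, not OutSystems code; the two Actions, the VAT example, and the field names `Subtotal` and `Vat` are hypothetical.

```python
from dataclasses import dataclass, asdict


@dataclass
class Result:
    Subtotal: float
    Vat: float


def calculate_subtotal(unit_price: float, quantity: int) -> float:
    """Hypothetical first Action."""
    return round(unit_price * quantity, 2)


def calculate_vat(subtotal: float, vat_rate: float) -> float:
    """Hypothetical second Action, which consumes the first Action's output."""
    return round(subtotal * vat_rate, 2)


def order_wrapper(unit_price: float, quantity: int, vat_rate: float) -> Result:
    """Wrapper: same inputs as the wrapped Actions, one structured output."""
    subtotal = calculate_subtotal(unit_price, quantity)
    vat = calculate_vat(subtotal, vat_rate)  # the output of one Action feeds the next
    return Result(Subtotal=subtotal, Vat=vat)


# The test verifies only the wrapper's output parameter.
response_body = {"Result": asdict(order_wrapper(20.0, 3, 0.21))}
print(response_body)
```

A single call covers both units and the hand-over between them, and the structured output keeps each verified value addressable by name.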

Step 4: End-to-End

When all business logic is verified to be working as expected, the integration between units is tested, as well as the interfaces with the available APIs. Two or three End-to-End tests are sufficient to verify the process as a whole. Here, we just verify the correct navigation between screens and make sure the UI interacts with the correct business logic.

Invest Early, Harvest Later

If you do not take the needed measures for testability, it will come back to haunt you in the end. Core Layer Testing asks for a different approach to development, and it is very likely that some technical design decisions need to be made in favor of testability. This might result in extra features that are, at first sight, not needed to fulfill the business requirement. However, the eventual goal is to create high-quality apps. For this, you need to be able to continuously verify whether existing and new functionality is working as desired. This assurance will let you innovate faster, because it will help you identify the impact of your changes.

Figure 4 shows how these design decisions can be made:

We’ve seen multiple examples where UI tests took over two minutes per test case to run because of page navigation and waiting times. For 100 test cases, that is over 3 hours of waiting for feedback.

These tests also failed quite often because of browser dependencies. With Core Layer Testing in place, these test cases took less than 5 seconds each to run, because the complete validation is done in one independent API request. This also invited the team to run the tests more often and even made it possible to include the tests in an automated CI/CD pipeline. The short waiting time also leaves no room to start working on something else in the meantime, which creates focus on getting things done. As a result, code quality improves already in the first increment.
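The arithmetic behind these numbers can be written out as a short sketch. It takes the figures above as bounds (at least 120 seconds per UI test case, at most 5 seconds per API test case) and assumes the test cases run one after another; the function name is illustrative.

```python
def suite_minutes(test_cases: int, seconds_per_case: float) -> float:
    """Total run time in minutes when test cases run sequentially."""
    return test_cases * seconds_per_case / 60


TEST_CASES = 100
ui_minutes = suite_minutes(TEST_CASES, 120)  # lower bound for the UI-driven suite
api_minutes = suite_minutes(TEST_CASES, 5)   # upper bound for the API-driven suite

print(f"UI-driven suite: at least {ui_minutes:.0f} minutes ({ui_minutes / 60:.1f} hours)")
print(f"API-driven suite: at most {api_minutes:.1f} minutes")
print(f"Speed-up: at least {ui_minutes / api_minutes:.0f}x")
```

Under these assumptions the same 100 test cases drop from more than 3 hours to under 10 minutes, which is short enough to run on every change.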

Next Steps

This article showed the most important part of how we think testing OutSystems applications should be done. The next steps involve thinking about what Actions to focus on and choosing the correct tools to build your automated tests with. Making an API for every public Action is probably too much to ask for, so what Actions could be chained together? What test data is needed to perform all the needed combinations and how can we leverage Test Data Management tools for this? We’ll talk about these things in our next article.

If you have any questions related to this article, I'm glad to help you out! Just reach out to me at BrianvandenBrink@valori.nl.

About Valori:

Valori is a trusted OutSystems Partner focusing on Quality Assurance and Testing. Based in Utrecht, The Netherlands, Valori worked together with OutSystems Product Development teams to define a unique approach to testing OutSystems applications. This approach is based on multiple Best Practices that both OutSystems and Valori have gathered over the last 5 years.