Ideation of an IDE: Changing the Wheels of a Moving 12-Wheel Truck

For the last two and a half years, the R&D Team at OutSystems has been busy. Very busy. Aware that the market share of macOS users had been growing and that our integrated development environment (IDE), Service Studio (which was originally built for Windows), did not provide these OutSystems developers the excellent experience we’re renowned for, we embarked on the serious mission of building a cross-platform IDE.

In software development, talking about changing the wheels while the car is in motion is a fairly common metaphor. This, however, felt like we were driving a 12-wheel truck. Our product has been evolving for 20 years now, distilling into roughly several million lines of code, handcrafted by hundreds of engineers, in a very organic company with a steep year-on-year growth and many unavoidable organizational changes.

Always brimming with technological breakthroughs, the market also keeps pushing for continuous improvements and the delivery of new capabilities to meet its demands. The insurgence of mobile apps, cloud computing, artificial intelligence, and machine learning advancements are just a few examples. These factors, amongst others, contributed to the ever-growing complexity of our IDE, the customer-facing side of the platform.

While the transformation project we embarked on did not imply a complete overhaul — we didn’t have a plethora of new functionalities to boast about — it involved a massive migration and a thorough visual revamp, significantly improving our product from a user experience standpoint. Plus, it comprised numerous infrastructural changes, as our Service Studio paired well with the Windows operating system for the past few years but now had to achieve the same seamless liaison with macOS.

So, the requirements were straightforward, the vision was clear. Before, Windows. After, Windows and macOS, under the shape of a cross-platform. It was time to roll up our sleeves and get to work. In this blog post, I’ll focus on the strategies we used to change the wheels of this moving 12-wheel truck and progressively roll out new functionalities without ever disturbing our passengers, also known as Service Studio users.

To Reuse, or Not to Reuse, That Is the Question

Just like there are infinite ways to tie two points together, there are many ways to tackle these endeavors. It all comes down to setting the right principles, weighing the pros and cons of several different approaches, and in the end, making informed decisions along the way.

The cross-platform IDE represented, in reality, a new product, which we were aware would take months, if not more, to be complete. We invested so much of our time into this that we didn’t want to take a “big bang” approach. There was no way we would allow ourselves to get trapped into a waterfall-style methodology and deliver an underwhelming product after so much hard work. Agile comes as second nature to us, and it’s not only about preaching but also exercising it to its full extent, regardless of how complex the task may be.

So the real question is: how could we incrementally deliver value in an agile fashion? How could we shift left the feedback on the changes we were performing on our product, thus mitigating the risk of breaking everything?

Simply put: we decided to seamlessly integrate the new code into the existing Windows version and release it to our customers every week. One could argue that no real value would be delivered to customers, as only the IDE guts would change. Besides some foreseen UI tweaks and restyling, customers probably wouldn’t be able to grasp much more. And I can relate to that.

Now, what about the internal value? For our teams and the project itself, it would be nothing short of incomparable. Such vision was supported — and made possible — because a large chunk of the code could be shared and reused by both old and new IDE versions. Given that the codebase would be joint to a certain extent, we adamantly refused to work on different repositories.

“Why?” you might wonder.

Well, first, we followed a trunk-based development model inside Engineering for years. Regardless of its advantages and drawbacks, we learned and matured our processes around that model throughout the years. An overwhelming transformation project was seen just like any other smaller project, say a new shiny feature in the IDE. We felt prepared for the challenge.

Secondly, having multiple repositories entailed considerable overhead in doubling the solutions, doubling the merges, doubling everything, basically. Ultimately, our main release line, the Windows-specific IDE, continued to evolve in the hands of many developers, who consistently delivered new features and bug fixes.

The Almost Identical Twins

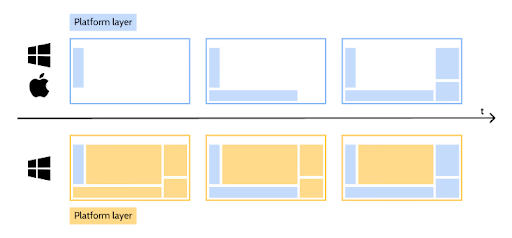

The approach above ensured there was no need to switch, merge, or validate different solutions. It meant, however, that we had to create a way to produce two separate desktop applications out of a shared repository.

So the real challenges began. It was time for the first major step: to create two Visual Studio solutions and transform the code to support both the .NET Framework and .NET Core, which we managed to do successfully with the help of some tools, including the Amazon Web Services Porting Assistant. As a result of this migration, and if you’ve been reading about our saga, I already told you about the fun time we had upon realizing our regression tests weren’t running. How’s that for a “good” surprise?

The most noticeable differences between both solutions were the platform layer that connected our runtime to each operating system and the visual revamp, given the transformation from WPF to ReactJS. Roughly speaking, this translated into importing different project files (*.csproj) in each solution for those specific areas of the code.

As a result, every commit made in the common areas of the code affected both versions. Changes performed in the main branch would be integrated by our CI/CD pipeline, which was responsible for — among other operations — building, packaging, and testing both Windows and the cross-platform versions.

This option came with the apparent risk of introducing regressions but largely compensated for the fact that we could release our changes progressively and get feedback from real customers using the product on a daily basis. We kept releasing weekly batches of increments validated by our Windows users, as we acted promptly on any unwanted faulty behavior. An additional effort was put into performing regular exploratory testing on each updated piece so that we could identify any issue as soon as possible.

In essence, we relied on two giant pillars: massive shift left and focus on lead time to recovery.

Branching It Softly

While this pretty much sums it up for common areas of the code, the two remaining pieces were purposely left out: the platform layer and the earlier mentioned visual revamp.

The logic supporting all macOS-related operations was pretty much specific to each version, meaning it was not interchangeable. In other words, progressive disclosure of the infrastructural layer was not possible at all. The runtimes (.NET Framework versus .NET Core) and UI Frameworks (WPF versus Avalonia) were by no means compatible. We had no option but to wait until the first minimum viable version of the cross-platform IDE was available to get down to business.

The visual sophistication of the IDE was a whole different story, though. Efforts creating a new browser engine to display React/Web-based dialogs and the migration of the dialogs themselves could, in fact, see the light of the day sooner. Like common parts, they could be progressively shipped to our Windows version to increase our confidence and incrementally deliver value in a long-lasting transformation initiative.

Unlike common parts, this entailed something called branch by abstraction. Martin Fowler defines it as “a technique for making a large-scale change to a software system in a gradual way that allows you to release the system regularly while the change is still in progress,” a.k.a. changing the car wheels while in motion.

Generally speaking, it revolves around creating abstraction layers that represent our system’s particular set of capabilities. These layers can then be implemented by specific suppliers (or providers). At first, the only supplier is the one we currently use and wish to deprecate. But as soon as a new implementation is ready — and because we slowly replaced all supplier usages by the abstraction layer — we can seamlessly swap between them, allowing for progressive disclosure of these suppliers, one by one.

Abstractions, however, come at a cost, especially in a product like ours. The gigantic elephant, which needs to be sliced, naturally accumulated legacy code throughout the years. We didn’t initially think it would provide different implementations in some of these areas. So it’s indeed one of those cases where you need to learn how to walk before you start running. Or, if you prefer, you first need to slow down before you can speed up. And so we did.

We focused on refactoring some of these layers and allowing different providers to supply their own view implementations, freeing teams to tackle them piece by piece, dialog after dialog. Dialogs vary in complexity and, therefore, in the time they take to complete. We estimated we would finish some in a couple of days. Others, most complex, could take up to three months.

As the teams progressed and delivered smaller sections, these were also made available to end-users in new releases of the Windows version. You see, not only are we fanatics in shifting left feedback, but we are also very keen to shift right as much as possible.

“They are crazy,” you might be thinking.

Well, not quite, as there is some sauce to it. The key in such progressive rollouts is knowing exactly how large your target population is and having fine-grained control over how users are exposed to the new code. Start small, think big.

Feature toggles were a pivotal mechanism in this approach, providing the proper infrastructure to manage execution flows and wire either old or new behaviors according to a predefined set of conditions, including:

- Nature of user

- Environment

- Version

By shipping code sprinkled with such toggles, we could silently switch the code on and off without the user noticing it and collect the required feedback on new developments. Should any outstanding issue arise due to these new developments, we could instantly fix it by turning the corresponding feature toggle off. How’s that for a lead time to recover from a problem?

We started by collecting feedback from our R&D Team. When we felt confident that a particular section was okay, we moved to other departments. Then, we started passing it on to our dear MVPs (most valuable professionals), slowly increasing the scope until we were finally confident enough to set the new behavior by default to every user. We’ll deep dive into our Early Access Program in a future article, so watch this space.

Pull Out the Dirty Tricks

Ideally, we should always work with such abstractions, as they represent the cleanest, most elegant solution to the problem at hand. Occasionally though, that might not be feasible at all, as there are differences that surpass language capabilities.

It also comes down to the effort you would accept putting into refactoring a specific area of the code to be able to apply the same recipe. Abstraction layers are sometimes tough to come by, especially when dealing with legacy code. Like any other software product in the world that has endured for two decades now, we have some.

Ultimately, the shorter path between two points is indeed a straight line. And despite being perfectionists by nature, we also excel in applying the Pareto principle, which is present in The Small Book of a Few Big Rules, the backbone of our culture at OutSystems. Many times, 80% of the consequences come from 20% of the causes.

That is why we used other not-so-pretty mechanisms, such as conditional symbols and code compilation guidelines, which albeit imperfect, are straightforward to use, helping us achieve the desired outcome in a simple way.

By using different MSBuild configurations for both versions — Windows-specific and cross-platform — we could isolate specific NuGet dependencies that could not be common to both solutions, like third-party libraries that did not support .NET Core at the time. Off-the-shelf default preprocessor directives provided by Microsoft allowed for inline differences in common files, due to the different target frameworks used by our solutions.

Another frog that became a very pretty and efficient prince was duplicated code. We know, we know, we were venturing out of DRY land, but sometimes refactoring code comes with the huge risk of introducing regressions. Providing the right abstractions and ensuring the adequate segregation of responsibilities in infrastructural, legacy code can become a huge liability.

In a perfect world, our batch of automated tests would be able to pinpoint any of these incongruences ahead of time, but you know… that’s not always the case. And ultimately, any decision you make must take your customers into account. It might not be the most flashy or elegant solution at hand, but if it shields them from any unforeseen consequences arising from your changes, it might be worth it. The value of trust is immeasurable and you cannot, or rather, you must not, break it.

Dirty Tricks Come With a Price

Albeit super effective, dirty tricks come with a price, and it’s called technical debt. In a way, dirty tricks are bandaids. They provide a transient, temporary workaround for a specific problem that later on needs to be revisited. Whenever your solution becomes stable enough, often you need to go through your code base and clean up all ramifications and exceptions.

Like everything else in life, there is a balance. It’s all about finding that sweet spot between what would be desirable from a technical standpoint and what effectively makes sense in order to deliver value to customers. You might be changing the wheels while the truck is in motion, but, for the sake of your passengers, you don’t change them all at the same time.

However, this major lesson learned came with its own challenge: as we walk this fine line and progressively roll out changes in our IDE, how do we ensure the quality of these changes? How do we get feedback from users and derive improvements from it? Our Early Access Program, which we mentioned earlier, was a massive part of such a process, one that we will get into on the next pitstop of this journey.